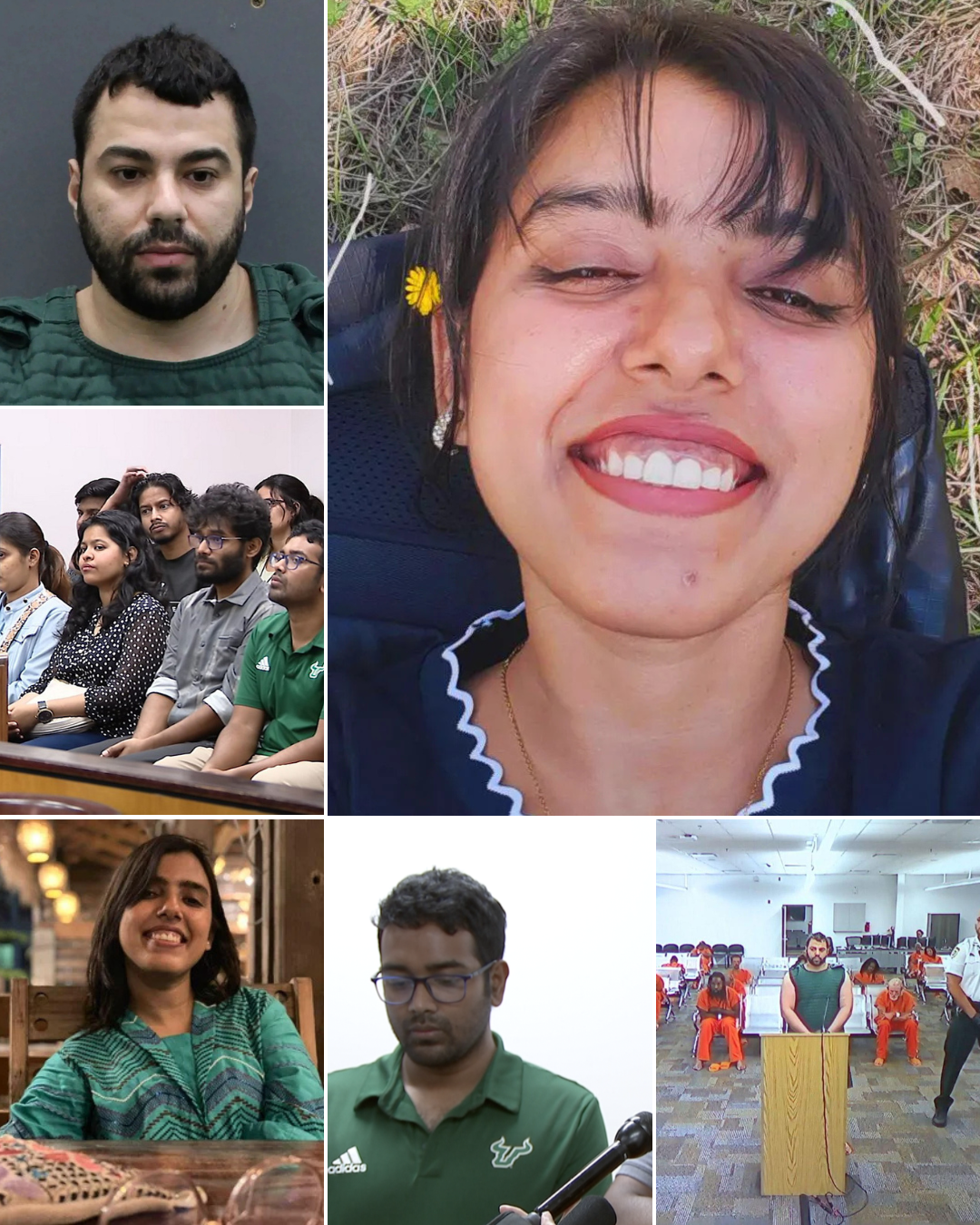

Police have released the suspect’s full ChatGPT history in the case of two missing Florida students, raising chilling questions, along with a notebook found under the bed…

The case involving the two missing graduate students in Florida is shocking not only for its violent nature, but also for an element never before so clearly revealed in criminal investigations: the history of conversations with artificial intelligence. When police and prosecutors released the entire history of exchanges between the suspect and ChatGPT, the story immediately shifted from a typical murder case to a broader debate about how humans plan crimes—and the tools they use to do so.

According to the investigation, suspect Hisham Abugharbieh, 26, is accused of murdering two graduate students at the University of South Florida. But what particularly caught investigators’ attention wasn’t just the criminal act itself, but the series of questions he asked before and after the two victims disappeared. These questions weren’t random; they formed a continuous train of thought, reflecting a systematic process of preparation and concealment. ([WTOP News][1])

Just days before the two victims went missing, the suspect asked ChatGPT a chilling question: what would happen if a body were placed in a garbage bag and thrown in a trash can? This wasn’t mere curiosity—it was a form of behavioral simulation, a virtual “test” before acting in real life. ([WTOP News][1])

Furthermore, subsequent inquiries revealed a clear attempt to cover his tracks. The suspect asked about changing vehicle identification numbers (VINs), gun ownership regulations, and even whether neighbors might have heard gunshots. ([WTOP News][1]) Taken together, these questions are not disjointed pieces of information. They form a behavioral map that runs from preparation through execution to concealment.

It is noteworthy that, according to records, the AI system itself responded that some of the questions “sounded dangerous.” ([WTOP News][1]) But the warning, while present, was not enough to stop the subsequent chain of behavior. This is where many experts began to ask whether a passive warning is sufficient in the context of pre-criminal queries.

After the two victims disappeared on April 16, the suspect’s string of questions did not stop—but continued in an even more disturbing direction. He asked about the possibility of survival after being shot in the head, asked whether gunshots could be detected, and then the legally charged question: what does “missing endangered adult” mean? ([WTOP News][1])

These questions reveal a clear psychological shift from the preparatory phase to the consequential phase. Where the earlier questions asked “how,” the later ones asked “what will happen.” In criminal investigation, this shift is often seen as a sign that the person is aware of what has occurred and is trying to assess the level of legal risk.

Alongside the ChatGPT history, physical evidence also gradually emerged. The body of one victim was found in a garbage bag, with multiple injuries, while the other was initially missing and later presumed dead. ([The Guardian][2]) Personal belongings, bloodstains, and movement data all linked the suspect to the scene. In that overall picture, the history of conversations with the AI is not the only evidence, but it serves as a “window into the mind,” helping investigators understand how the behavior took shape.

Another detail mentioned during the search was the notebook found under the bed, a powerful symbolic element. While its specific contents haven’t been fully released, its existence suggests that the suspect’s thought process wasn’t limited to the digital space but was also recorded in physical form. The combination of personal notes and AI queries creates a “dual planning” system, a loop of thinking that moves back and forth between the real and virtual worlds.

From an investigative perspective, this represents a significant turning point. In previous cases, reconstructing a suspect’s intentions often relied on testimony, witnesses, or indirect documents. In this case, the conversation history with the AI provides a nearly real-time data stream, a kind of “digital diary” reflecting thoughts before, during, and after the act. This not only strengthens the case against the suspect but also changes how law enforcement agencies approach evidence.

However, this very factor has also opened up a larger debate: where does the responsibility of AI platforms lie? When someone uses a tool to ask questions related to criminal behavior, is that tool merely a neutral instrument, or does it have a responsibility to intervene to some degree? The case has prompted Florida authorities to expand their investigation to include the role of technology companies. ([Axios][3])

This isn’t the first time ChatGPT or other chatbots have appeared in criminal cases. From mass shootings to domestic violence cases, there have been numerous instances of suspects using AI as a reference source. ([Wikipedia][4]) The difference here is the level of detail and continuity of the query history, which makes it central evidence rather than just a secondary detail.

On a societal level, the case raises a difficult question: is technology changing how people commit crimes, or merely exposing what already exists? A person with criminal intent may seek information from various sources—the internet, books, or personal experience. AI, in this case, is simply a new tool, but with its rapid response and conversational capabilities, it can provide a more direct sense of “guidance.”

However, it’s important to distinguish: the tool doesn’t create intent. The questions the suspect asks reflect a pre-existing state of mind. AI doesn’t initiate behavior, but it can become part of the process of rationalizing or specifying that behavior. This is a point many experts emphasize, to avoid oversimplifying the issue as a “technological error.”

Nevertheless, it’s undeniable that the emergence of “digital footprints” like a ChatGPT history is shifting the balance in criminal investigations. A suspect’s thoughts were once the most difficult thing to access; now they can be partly reconstructed through data. This gives investigators an advantage, but also raises questions about privacy and the limits of surveillance.

Ultimately, what makes this case so haunting is not just the violence, but the way it was “constructed” step by step—through questions, assumptions, and experiments in virtual space. ChatGPT history, the notebook under the bed, and physical evidence—all combine to create a picture where the line between thought and action is thinner than ever.

And perhaps the most terrifying thing isn’t the technology itself, but the fact that it allows us to see more clearly what was previously hidden: the process of an intention becoming an action—question by question, step by step, until there is no turning back.

News

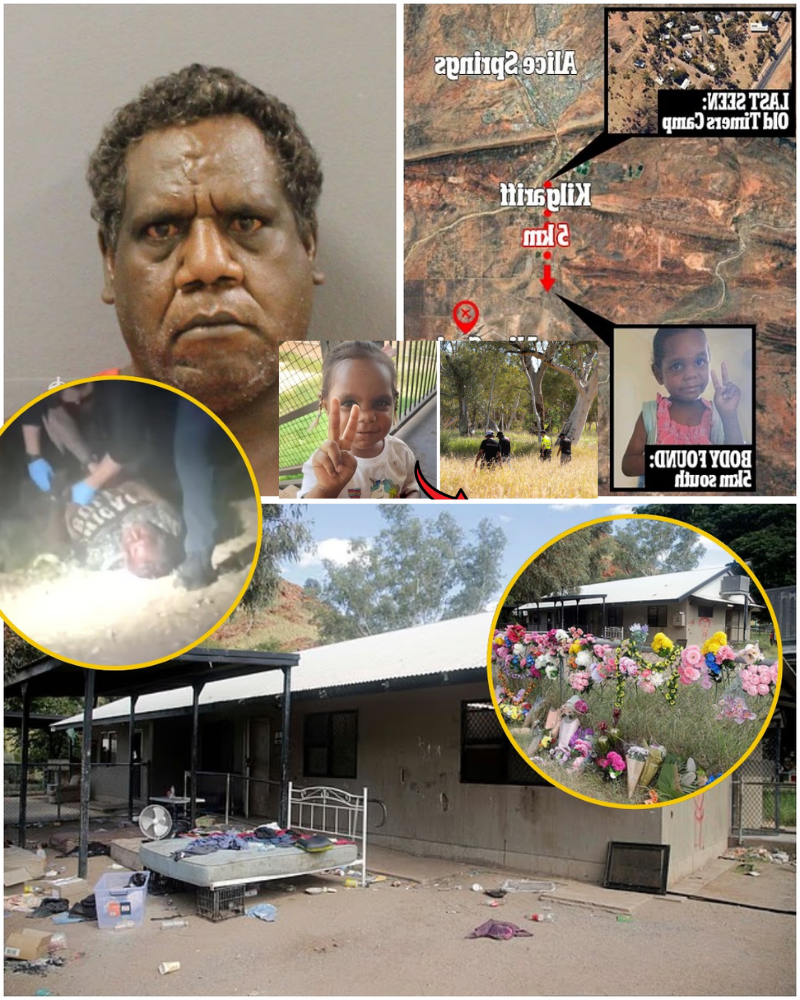

THE TRUTH FINALLY COMES TO LIGHT: A chilling police–suspect exchange in the Kumanjayi Little Baby case exposes what really happened the night the 5-year-old vanished from her bed — all unraveling from ONE LINE that changed everything

The story of the death of 5-year-old Kumanjayi Walker in a remote area of the Northern Territory has long been more than just a criminal case; it’s a test of how the justice system handles truth in vulnerable communities. For months, information surrounding the night she disappeared from her bed was shrouded in ambiguity, conflicting […]

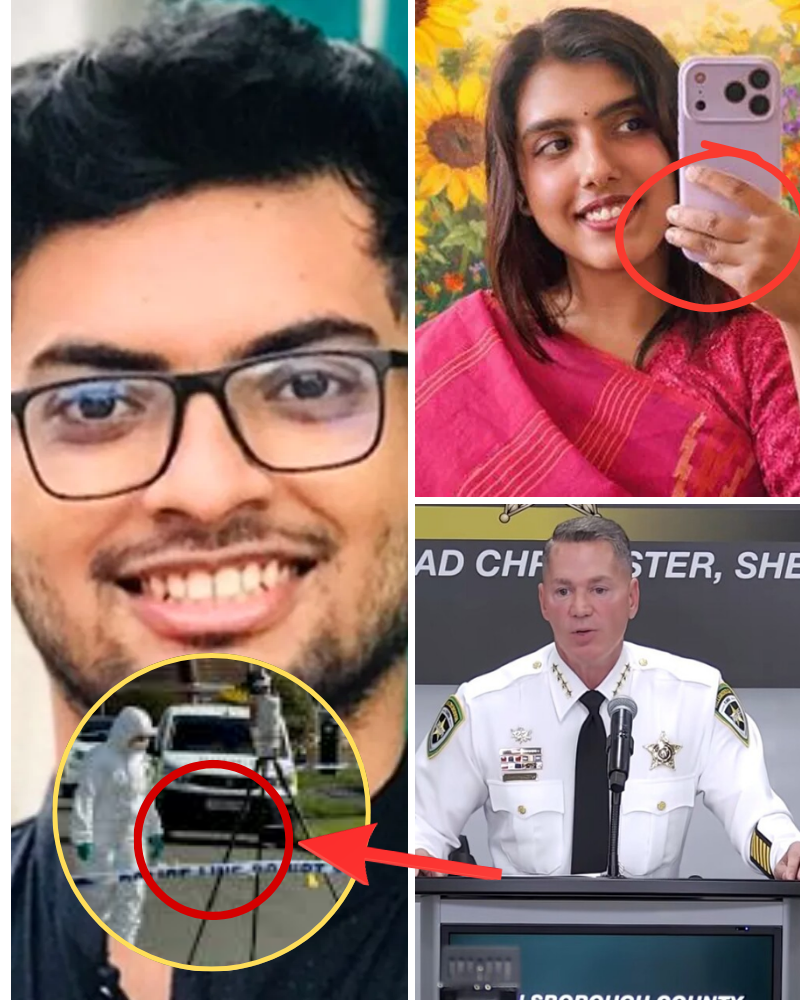

Two University of South Florida students mu-rde-red: Investigation reveals chilling sequence of events – and DETAILS of 17 minutes of disappearance captured on camera leave everyone stunned

Two University of South Florida Students Murdered: Investigation Reveals Chilling Sequence of Crime – and the 17-Minute Disappearance on Camera Footage Stuns Everyone. The case involving two graduate students at the University of South Florida is shocking not only for its violent nature, but also for the way each detail of the investigation has gradually […]

LATEST: Nahida Bristy’s autopsy report is available, police announce the cause of de@th of the graduate student was….

Nahida Bristy’s remains were found on Sunday, April 26 in a nearby county more than a week after she was reported missing from Tampa, Fla. Nahida S. Bristy.Credit : University of South Florida Police Department Authorities in Florida have confirmed a set of remains found last week belong to Nahida Bristy, the doctoral student whose suspicious disappearance has […]

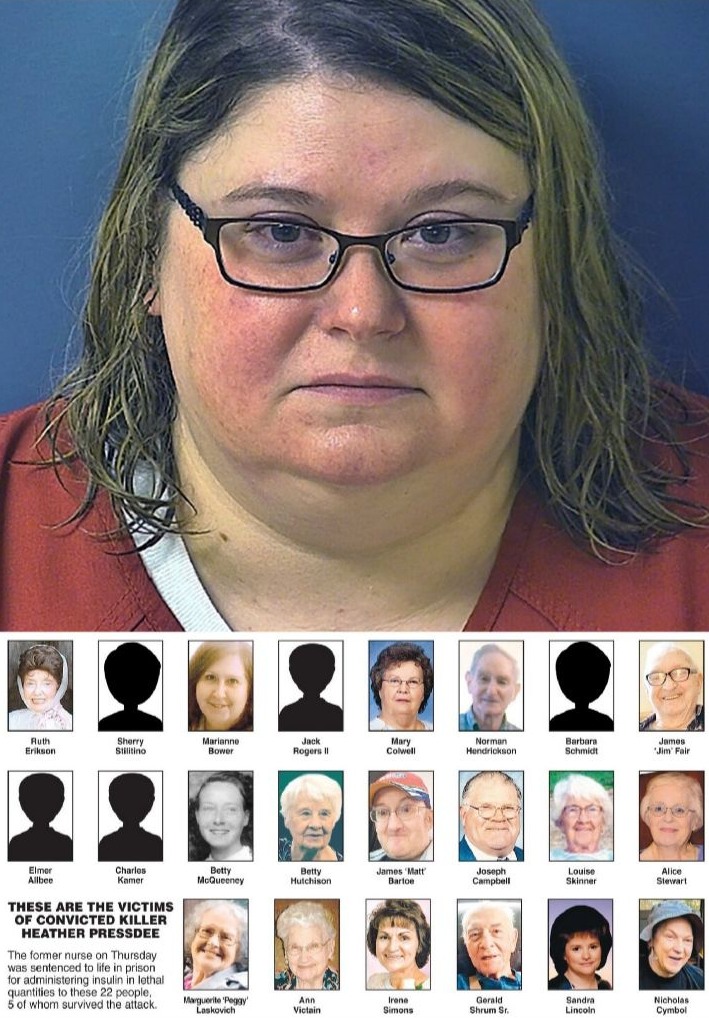

41-year-old Pennsylvania nurse, Heather Pressdee, was recently sentenced to life in prison with hundreds of additional years for k-illing 17 patients with lethal doses of insulin at the nursing homes where she worked.

The Heather Pressdee case not only shocked Pennsylvania but also sparked a wide-ranging debate about the flaws in the healthcare system—particularly in nursing homes, where patients are often most vulnerable. When a nurse—a person tasked with caring for and protecting lives—is sentenced to life imprisonment plus hundreds of years in prison for murdering patients with […]

The story of Pedro Rodrigues Filho begins with a childhood that many cannot imagine. While still in his mother’s womb, he suffered brutal abuse from his own father—to the point that his body bore the marks of trauma from birth. The tragedy did not end there, as his father later took the life of his wife in a shocking act.

The story of Pedro Rodrigues Filho begins with a childhood many would find unimaginable. Even in the womb, he suffered brutal abuse from his own father—to the point that he was born with visible injuries. The tragedy didn’t end there, as his father later took his wife’s life in a shocking act. Years later, after […]

Around 10 p.m., Madeleine’s mother returned to the room and found the window open and the bed empty. The two children were still asleep, but Madeleine was gone…

Nineteen years ago, on a night like this, a three-year-old girl disappeared from a holiday apartment in southern Portugal—the beginning of one of the most sensational disappearances in the modern world. That girl was Madeleine McCann. She disappeared during a family holiday at Praia da Luz on the evening of May 3, 2007. That night, […]
